Oct 9, 2025

Improving NAb Assay Sensitivity and Drug Tolerance by Optimizing MRD

Adjusting Minimal Required Dilution (MRD) from 1:4 to 1:2 in a competitive ligand binding assay improved NAb assay sensitivity 4-fold and drug tolerance 5-fold. See the data and methodology.


Neutralizing antibody (NAb) assays are essential tools for evaluating the immunogenicity of biotherapeutic proteins. NAb assay development and validation require careful attention to every parameter in the workflow, and among the different assay formats, Competitive Ligand Binding Assays (cLBA) are widely used in early-phase studies for therapeutics with a lower immunogenicity risk.

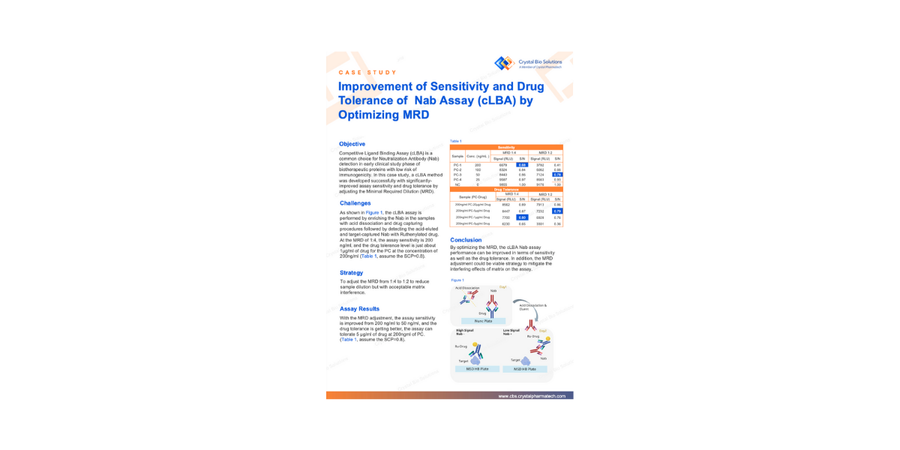

There is one parameter in cLBA design that often gets locked in early and is rarely revisited: the Minimal Required Dilution (MRD). In a recent case study at Crystal Bio Solutions, adjusting the MRD from 1:4 to 1:2 improved positive control (PC) detection from 200 ng/mL to 50 ng/mL and raised drug tolerance from 1 µg/mL to 5 µg/mL without introducing unacceptable matrix interference.

This article covers what MRD is and why it matters, how the optimization was designed, what the data showed, when this strategy applies, and what it means for your IND submission.

What MRD Actually Controls in a NAb Assay

MRD is the lowest sample dilution at which the biological matrix (such as serum or plasma) stops interfering with the assay signal at an unacceptable level. It is essentially the floor of your sample preparation.

- Set MRD too high and you over-dilute the sample. The matrix interference is well controlled, but so is the analyte. You end up chasing low-titer antibody responses with less signal than you need.

- Set MRD too low and background noise creeps up, and you risk false positives or an unstable cut point.

In a cLBA specifically, MRD also shapes drug tolerance. The assay works by competing patient-derived neutralizing antibodies (NAbs) against a labeled drug for the same target. When circulating drug is present in the sample, it competes with the detection system. At MRD 1:4, that drug gets diluted, which helps, but is not always enough. In early clinical phases, free drug concentrations in patient samples can easily sit above 1 µg/mL, and at that level, a standard MRD 1:4 cLBA starts producing false negatives. The drug wins the competition, the signal drops below the cut point, and a NAb-positive sample gets misclassified.

That is the problem MRD optimization addresses directly.

Our cLBA NAb Assay Performance

The NAb sample testing workflow in this case study was developed for a biotherapeutic protein program with low immunogenicity risk. The cLBA workflow ran as follows: acid dissociation to release NAbs from circulating drug complexes, drug capture on a Nunc plate, acid elution of the captured NAbs, and electrochemiluminescent detection using a ruthenylated drug on an MSD High Bind (HB) plate.

At MRD 1:4, with the screening cut point (SCP) set at an S/N ratio of 0.8, the assay showed a sensitivity of 200 ng/mL and a drug tolerance of approximately 1 µg/mL of co-administered drug at the 200 ng/mL PC level.

For a program where drug concentrations at sampling time stay low, these numbers are workable. For most early-phase biologics programs, they are not. Drug levels at the time of sample collection frequently exceed 1 µg/mL, which means the assay tolerance threshold is right at the edge of what the clinical situation demands. One patient with slightly higher drug exposure produces a false negative.
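To make the term concrete, here is a minimal sketch of how a drug tolerance value can be read off a drug spike series at a fixed PC level. The function name `drug_tolerance` and the spike series are hypothetical and are not the case study's data. The sketch assumes each spike level has already been scored positive or negative against the cut point.

```python
def drug_tolerance(spike_results):
    """Return the highest drug concentration (µg/mL) at which the positive
    control still scores positive, with every lower level positive as well.

    spike_results: iterable of (drug_ug_per_ml, scored_positive) pairs.
    Returns None if even the lowest drug level scores negative.
    """
    tolerance = None
    # Walk the series from lowest to highest drug level and stop at the
    # first level where the PC is no longer detected.
    for drug, positive in sorted(spike_results):
        if not positive:
            break
        tolerance = drug
    return tolerance


# Hypothetical spike series at one PC level (illustrative values only).
series = [(0.1, True), (0.5, True), (1.0, True), (5.0, False), (10.0, False)]
print(drug_tolerance(series))  # 1.0
```

Stopping at the first negative level is a conservative choice: a positive result at a higher drug level does not count if a lower level has already failed.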

How to Optimize MRD in a cLBA NAb Assay

The approach was straightforward: reduce the MRD from 1:4 to 1:2. Only the dilution factor changed. Everything else stayed the same: acid dissociation conditions, drug capture protocol, ruthenylated drug concentration, and MSD platform.

A lower MRD means less dilution, which means more NAb in the detection step and more drug in the sample. Sensitivity should improve because there is more analyte. Drug tolerance should also improve because the relative contribution of drug interference decreases when the NAb signal itself is stronger. The risk is that matrix components also concentrate, potentially raising background noise above acceptable levels.

That is the tradeoff the validation was designed to assess.
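The dilution arithmetic behind that reasoning can be sketched in a few lines. This is a simplification that treats "1:N" as an N-fold dilution and ignores the acid dissociation, capture, and elution steps, which also change the concentrations that reach the detection plate. The function name and the sample values are hypothetical.

```python
def in_well_concentration(neat_conc, dilution_factor):
    """Concentration after diluting a neat sample 1:dilution_factor.

    Assumes "1:N" means an N-fold dilution (1 part sample in N parts total).
    """
    if dilution_factor < 1:
        raise ValueError("dilution_factor must be >= 1")
    return neat_conc / dilution_factor


# Hypothetical neat sample: 200 ng/mL NAb and 1000 ng/mL (1 µg/mL) free drug.
for mrd in (4, 2):
    nab = in_well_concentration(200.0, mrd)
    drug = in_well_concentration(1000.0, mrd)
    print(f"MRD 1:{mrd} -> NAb {nab:.0f} ng/mL, drug {drug:.0f} ng/mL")
```

For this hypothetical sample, moving from 1:4 to 1:2 doubles both values (NAb 50 to 100 ng/mL, drug 250 to 500 ng/mL). The NAb-to-drug ratio does not change with dilution, so any tolerance gain has to come from the stronger absolute NAb signal, and that has to be confirmed experimentally.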

The Results: Four-Fold Sensitivity Gain, Five-Fold Drug Tolerance Gain

The MRD 1:2 optimization delivered meaningful improvements across both sensitivity and drug tolerance, confirmed across multiple positive control concentrations and drug spike levels on the MSD electrochemiluminescence platform.
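The fold gains in the heading follow directly from the before and after values reported in this article. A short sketch of the arithmetic, with a hypothetical helper named `fold_gain`:

```python
def fold_gain(before, after, lower_is_better=False):
    """Fold improvement between two positive values.

    For sensitivity, a lower detectable concentration is better, so the
    gain is before / after. For drug tolerance, higher is better, so the
    gain is after / before.
    """
    if before <= 0 or after <= 0:
        raise ValueError("values must be positive")
    return before / after if lower_is_better else after / before


# Values reported in this article for MRD 1:4 versus MRD 1:2.
sensitivity_gain = fold_gain(200.0, 50.0, lower_is_better=True)  # ng/mL
tolerance_gain = fold_gain(1.0, 5.0)                             # µg/mL
print(sensitivity_gain, tolerance_gain)  # 4.0 5.0
```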

The full dataset, including signal-to-noise ratios at each PC concentration and drug tolerance levels across the tested range, is available in the complete case study.

Download the full case study to see the data.

When This Strategy Works and When It Does Not

MRD optimization is worth considering in two situations. First, when your sensitivity data shows the assay is struggling to detect low-titer responses that are clinically relevant for your program. Second, when your PK data or population modeling tells you that drug levels at your sampling timepoints will regularly exceed the baseline tolerance threshold.

It is less useful in a few scenarios. If matrix interference is already elevated at baseline, reducing MRD further will likely push background signal above acceptable limits. If you are running a cell-based NAb assay, the considerations are different: cytotoxicity and non-specific cellular effects at low dilution create their own complications that MRD alone cannot resolve. And if the drug has specific physicochemical interactions with matrix components, dilution factor alone may not be the governing variable.
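For the PK-driven case, a quick screen is to count how many expected sample concentrations sit above the assay's drug tolerance. The sketch below uses hypothetical predicted concentrations, not data from the case study. The two thresholds are the tolerance values reported in this article for MRD 1:4 and MRD 1:2.

```python
def fraction_above_tolerance(drug_levels_ug_ml, tolerance_ug_ml):
    """Fraction of samples whose drug level exceeds the assay tolerance."""
    levels = list(drug_levels_ug_ml)
    if not levels:
        raise ValueError("at least one drug level is required")
    above = sum(1 for level in levels if level > tolerance_ug_ml)
    return above / len(levels)


# Hypothetical predicted drug concentrations (µg/mL) at sampling timepoints.
predicted = [0.4, 0.8, 1.2, 1.5, 2.0, 3.1, 4.2, 6.0]

for tolerance in (1.0, 5.0):
    share = fraction_above_tolerance(predicted, tolerance)
    print(f"tolerance {tolerance} µg/mL: {share:.1%} of samples above")
```

With these illustrative values, 75.0% of samples exceed a 1 µg/mL tolerance and 12.5% exceed 5 µg/mL. A gap of that size between the two thresholds is the kind of signal that would justify testing a lower MRD.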

One important operational point: changing the MRD means re-establishing the screening cut point. The SCP is derived from the distribution of background signal in a representative donor population, and that distribution shifts when you change sample preparation conditions. The cut point from MRD 1:4 does not carry over to MRD 1:2. That revalidation step is not optional.
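As an illustration of why the cut point cannot carry over, here is a minimal sketch of a parametric cut-point calculation run separately on donor data for each MRD. Several things here are assumptions and not the method used in the case study: the donor S/N values are invented, the 1% false-positive target is assumed, and the cut point is taken on the lower tail on the assumption that NAb-positive samples reduce signal. A real validation would also use a larger donor panel and handle outliers and distribution checks.

```python
from statistics import NormalDist, mean, stdev


def screening_cut_point(donor_sn, false_positive_rate=0.01):
    """Parametric lower-tail cut point: mean + z * SD, where z < 0.

    donor_sn: S/N ratios from drug-naive donor samples at one MRD.
    false_positive_rate: assumed target rate (1% here, an assumption).
    """
    if len(donor_sn) < 2:
        raise ValueError("need at least two donor values")
    z = NormalDist().inv_cdf(false_positive_rate)  # about -2.33 for 1%
    return mean(donor_sn) + z * stdev(donor_sn)


# Hypothetical donor S/N values; the spread is wider at the lower dilution
# to mimic more matrix effect. These are not the case study's data.
donors_mrd4 = [1.02, 0.97, 1.05, 0.99, 0.94, 1.01, 1.03, 0.96, 1.00, 0.98]
donors_mrd2 = [1.04, 0.91, 1.09, 0.95, 0.88, 1.02, 1.07, 0.90, 1.01, 0.93]

print(f"MRD 1:4 cut point: {screening_cut_point(donors_mrd4):.3f}")
print(f"MRD 1:2 cut point: {screening_cut_point(donors_mrd2):.3f}")
```

Because the donor distribution differs between the two conditions, the two calls return different cut points. That is the practical reason the value established at one MRD cannot be reused at another.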

If you are weighing whether MRD adjustment is the right move for your assay, reach out to talk through your sensitivity and PK data with our team.

NAb Assay Validation and Regulatory Expectations

FDA and EMA both require that NAb assay validation demonstrate sensitivity and drug tolerance appropriate to the clinical program it supports. Neither agency mandates a specific MRD, but both expect the choice to be justified with validation data.

In practice, that means your bioanalytical method report should state the MRD, explain why it was selected, and present the sensitivity and drug tolerance data that support it. If you changed MRD specifically to address a drug tolerance problem, that reasoning should be explicit. Reviewers will ask.

The data from this case study provides a well-documented example of that justification: a defined problem at MRD 1:4, a targeted single-variable change to MRD 1:2, validation confirming the expected improvement, and confirmation that matrix interference remained controlled. That is the structure a solid bioanalytical report follows.

For programs that require comprehensive ADA and NAb detection strategies, CBS integrates immunogenicity assay design with broader bioanalytical planning from the earliest development stages. Learn more about our bioanalytical services.


Key Takeaways

- Adjusting MRD from 1:4 to 1:2 improved sensitivity from 200 ng/mL to 50 ng/mL and drug tolerance from 1 µg/mL to 5 µg/mL in a cLBA NAb assay.

- Matrix interference did not increase meaningfully at the lower dilution, confirming the optimization was viable for this assay system.

- MRD is one of the more accessible levers in cLBA design, but any change requires cut-point re-establishment and full revalidation before regulatory submission.

- The strategy is most applicable when drug PK data indicates clinical samples will be collected at drug concentrations that exceed baseline assay tolerance.

📥📎 Access the case study

Frequently Asked Questions

What is MRD in a NAb assay?

MRD is the lowest sample dilution at which matrix interference is acceptably controlled. It governs both how sensitive the assay can be and how much circulating drug it can tolerate before producing false-negative results.

Why does MRD affect sensitivity?

Higher MRD means more dilution, which means less analyte at the detector. Reducing MRD from 1:4 to 1:2 concentrates the sample and increases the signal from low-titer NAb responses that would otherwise fall below the detection threshold.

What is drug tolerance in a NAb assay?

Drug tolerance is the highest drug concentration at which the assay still correctly classifies a NAb-positive sample. When circulating drug exceeds tolerance, it outcompetes the detection system and suppresses the signal into false-negative territory. In this study, tolerance improved from 1 µg/mL to 5 µg/mL after MRD adjustment.

When should MRD optimization be considered?

When baseline sensitivity is insufficient for the expected ADA titer range, or when PK data indicates clinical drug levels will regularly exceed the assay tolerance threshold at the planned sampling timepoints.

Does reducing MRD always improve performance?

Not always. Lower dilution increases analyte signal but also concentrates matrix components. If background noise rises above acceptable limits, the sensitivity gain can be offset by cut-point instability or false positives. Validation is required to confirm the tradeoff is favorable.

Is this approach applicable to cell-based NAb assays?

MRD adjustment as described here applies to cLBA formats. Cell-based assays involve different interference mechanisms, including cytotoxicity and non-specific cellular activation, that require separate optimization strategies.

What regulatory guidance is relevant here?

The FDA 2019 immunogenicity guidance and the EMA guideline on immunogenicity assessment of therapeutic proteins both address NAb assay validation expectations, including sensitivity, drug tolerance, and cut point. Neither specifies a required MRD, but both expect the choice to be justified with validation data.

What happens to the cut point after an MRD change?

The screening cut point must be re-established. It is derived from the signal distribution across a representative donor population, and that distribution changes when sample preparation conditions change. Carrying over the cut point from a previous MRD condition is not scientifically defensible.

References

- U.S. Food and Drug Administration. Immunogenicity Testing of Therapeutic Protein Products: Developing and Validating Assays for Anti-Drug Antibody Detection. FDA Guidance for Industry, 2019. Available at: www.fda.gov

- European Medicines Agency. Guideline on Immunogenicity Assessment of Biotechnology-Derived Therapeutic Proteins. EMA/CHMP/BMWP/14327/2006 Rev 1. Available at: www.ema.europa.eu

- Shankar G, et al. Recommendations for the Validation of Immunoassays Used for Detection of Host Antibodies Against Biotechnology Products. Journal of Pharmaceutical and Biomedical Analysis. 2008;48(5):1267–1281.

- Mire-Sluis AR, et al. Recommendations for the Design and Optimization of Immunoassays Used in the Detection of Host Antibodies Against Biotechnology Products. Journal of Immunological Methods. 2004;289(1-2):1–16.

Article written by Crystal Bio Solutions Scientific Marketing Team. March, 2026.